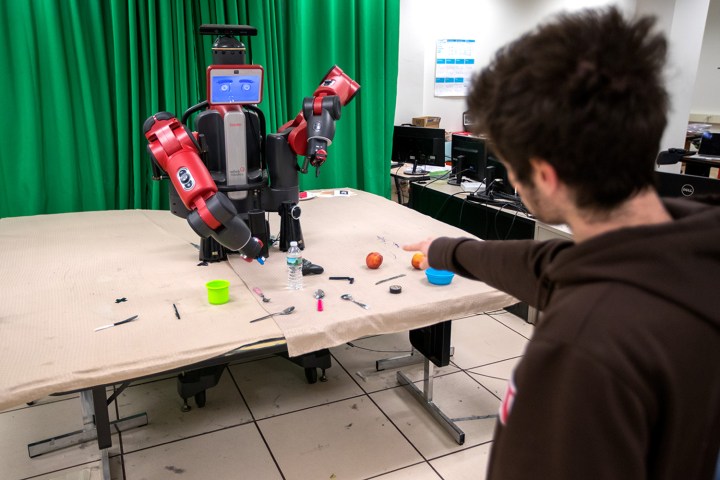

A team of researchers from Brown University is working to instill this social competence into robots and recently developed an algorithm that lets them perform tasks better by collaborating with people.

In the study, conducted by Brown’s Humans to Robots Laboratory, the robots were programmed to ask for clarification. When a person told a robot to grab a certain object and the command was ambiguous, the robot could ask, “Which one?” This exchange allowed the robot to perform tasks 25 percent faster and 2.1 percent more accurately than a state-of-the-art baseline, according to the research, which will be presented at the International Conference on Robotics and Automation in Singapore this spring.

The goal for the Brown lab at this point is less about creating flawless machines than it is about creating machines that can catch and correct their mistakes.

“It is very hard to go from 90 percent accuracy to 99.9 percent accuracy,” Stefanie Tellex, a computer science professor and the lead researcher, told Digital Trends. “Yet 90 percent accuracy means the robot will fail on one in ten interactions. If it is interacting with you every day, that means it will fail every single day. With our technology, though, the robot will automatically detect the failure and ask questions.”

“Using this feedback loop, a failure isn’t the end of the story,” she added. “It can ask a question, and recover.”

The question-asking algorithm is a step forward for Tellex and her team, who previously created an algorithm that could respond to verbal and gesture commands from people. However, the new system still becomes confused when asked to retrieve an object from among many similar objects on a table. Even so, the algorithm worked surprisingly well with untrained participants, who even assumed it could understand and respond to complex phrases it wasn’t programmed to comprehend.

“We envision robots assisting astronauts in space,” Tellex said, pointing out that the research was funded in part by NASA. She also thinks these machines could help people here on Earth, from workers assisting patients in hospitals to people at home.
