Both AMD and Nvidia make some of the best graphics cards on the market, but it’s hard to deny that Nvidia is usually in the lead. I don’t just mean the vast difference in market share. In this generation, it’s Nvidia that has the behemoth GPU that’s better than all the other cards, while AMD doesn’t have an answer to the RTX 4090 just yet.

Another thing that AMD doesn’t have a strong answer to right now is artificial intelligence. Even though I’m switching to AMD for personal use, it’s difficult to ignore the facts: Nvidia is winning the AI battle. Why is there such a marked difference, and will this become more of a problem for AMD down the line?

It’s not all about gaming

Most of us buy graphics cards based on two things — budget and gaming capabilities. AMD and Nvidia both know that the vast majority of their high-end consumer cards end up in gaming rigs of some sort, although professionals pick them up too. Still, gamers and casual users make up the biggest part of this segment of the market.

For years, the GPU landscape was all about Nvidia, but in the last few generations, AMD made big strides — so much so that it trades blows with Nvidia now. Although Nvidia leads the market with the RTX 4090, AMD’s two RDNA 3 flagships (the RX 7900 XTX and RX 7900 XT) are powerful graphics cards that often outperform similar offerings from Nvidia, while being cheaper than the RTX 4080.

If we pretend that the RTX 4090 doesn’t exist, then comparing the RTX 4080 and 4070 Ti to the RX 7900 XTX and XT tells us that things are pretty even right now; at least as far as gaming is concerned.

And then, we get to ray tracing and AI workloads, and this is where AMD drops off a cliff.

There’s no way to sugarcoat this: Nvidia is simply better at running AI workloads than AMD is right now. That’s less an opinion than a fact, and it’s not the only ace up Nvidia’s sleeve.

Tom’s Hardware recently tested AI inference on Nvidia, AMD, and Intel cards, and the results were not favorable to AMD at all.

To compare the GPUs, the tester benchmarked them in Stable Diffusion, an AI image generator. Read the source article if you want to know all the technical details that went into setting up the benchmarks, but long story short, Nvidia outperformed AMD, and Intel’s Arc A770 did so poorly that it barely warrants a mention.

Even getting Stable Diffusion to run on anything other than an Nvidia GPU seems to be quite the challenge, but after some trial and error, the tester was able to find projects that were somewhat suited to each GPU.

After the testing, the end result was that Nvidia’s RTX 30-series and RTX 40-series both did fairly well (albeit after some tweaking for the latter). AMD’s RDNA 3 line also held up well, but the last-gen RDNA 2 cards were fairly mediocre. However, even AMD’s best card was miles behind Nvidia in these benchmarks, showing that Nvidia is simply faster and better at tackling AI-related tasks.

Nvidia cards are the go-to for professionals in need of a GPU for AI or machine learning workloads. Some people may buy one of the consumer cards and others may pick up a workstation model instead, such as the confusingly named RTX 6000, but the fact remains that AMD is often not even on the radar when such rigs are being built.

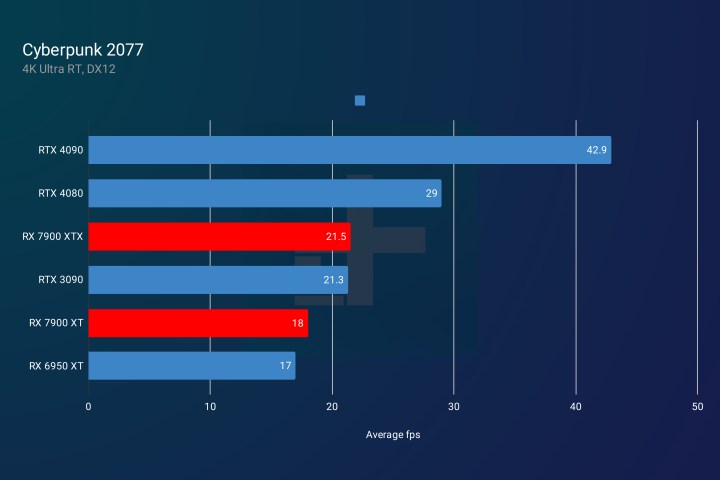

Let’s not gloss over the fact that Nvidia also has a strong lead over AMD in things like ray tracing and Deep Learning Super Sampling (DLSS). In our own benchmarks, we found that Nvidia still leads the charge in ray tracing over AMD, but at least Team Red seems to be making steps in the right direction.

This generation of GPUs is the first one where the ray tracing gap is closing. In fact, AMD’s RX 7900 XTX outperforms Nvidia’s RTX 4070 Ti in that regard. However, Nvidia’s Ada Lovelace GPUs have another edge in the form of DLSS 3, a technology that uses AI to generate entire frames instead of just pixels. Once again, AMD is falling behind.

Nvidia has a long history of AI

AMD and Nvidia graphics cards are vastly different on an architectural level, so it’s impossible to compare them directly, spec for spec. However, one thing we do know is that Nvidia’s cards are optimized for AI in terms of their very structure, and this has been the case for years.

Nvidia’s latest GPUs are equipped with Compute Unified Device Architecture (CUDA) cores, whereas AMD cards have Compute Units (CUs) and Stream Processors (SPs). Nvidia also has Tensor Cores that aid the performance of deep learning algorithms, and with Tensor Core Sparsity, they also help the GPU skip unnecessary computations. This reduces the time the GPU needs to perform certain tasks, such as training deep neural networks.
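
To make the idea of skipping unnecessary computations a bit more concrete, here is a minimal sketch in plain Python. It assumes the 2:4 structured-sparsity pattern that Nvidia has described for its sparse Tensor Cores, where two out of every four weights are zeroed. The function names `prune_2_of_4` and `sparse_dot` are made up for illustration, and real hardware works on compressed matrices rather than looping like this.

```python
# Illustrative sketch only: plain Python, not how Tensor Cores are programmed.

def prune_2_of_4(weights):
    """Zero the two smallest-magnitude values in every group of four."""
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned = []
    for start in range(0, len(weights), 4):
        group = weights[start:start + 4]
        # Indices of the two largest-magnitude weights in this group.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        pruned.extend(w if i in keep else 0.0 for i, w in enumerate(group))
    return pruned


def sparse_dot(weights, inputs):
    """Dot product that skips zero weights; returns (result, multiplies done)."""
    total = 0.0
    multiplies = 0
    for w, x in zip(weights, inputs):
        if w == 0.0:
            continue  # this is the skipped, unnecessary computation
        total += w * x
        multiplies += 1
    return total, multiplies


# Hypothetical example values, chosen only to show the pattern.
weights = [0.9, -0.1, 0.05, -0.7, 0.2, 0.8, -0.6, 0.1]
inputs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]

pruned = prune_2_of_4(weights)
result, multiplies = sparse_dot(pruned, inputs)
print(pruned, result, multiplies)
```

With eight weights, the pruned version needs four multiplications instead of eight. That halving is the kind of saving the sparsity feature is after, at the cost of discarding the smallest weights.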

CUDA cores are one thing, but Nvidia has also created a parallel computing platform by the same name, which is only accessible to Nvidia graphics cards. CUDA libraries allow programmers to harness the power of Nvidia GPUs in order to run machine learning algorithms much faster.
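
For a rough feel of what programming in the CUDA style looks like, the sketch below simulates it on the CPU in plain Python. It borrows the standard CUDA indexing scheme, where each thread works out its own element as block index times block size plus thread index. The `launch` helper and `vector_add_kernel` are hypothetical stand-ins I’m using for illustration, not part of any Nvidia library, and the loops run one after another where a GPU would run the threads in parallel.

```python
# Illustrative sketch only: simulates CUDA-style indexing on the CPU.

def vector_add_kernel(block_idx, thread_idx, block_dim, a, b, out):
    """Body that each simulated thread runs for exactly one element."""
    i = block_idx * block_dim + thread_idx  # global index of this thread
    if i < len(out):  # guard: the last block may have spare threads
        out[i] = a[i] + b[i]


def launch(kernel, n, block_dim, *args):
    """Run the kernel once per thread; a GPU would run these in parallel."""
    grid_dim = (n + block_dim - 1) // block_dim  # blocks needed, rounded up
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            kernel(block_idx, thread_idx, block_dim, *args)
    return grid_dim


# Hypothetical example data.
a = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
b = [10, 20, 30, 40, 50, 60, 70, 80, 90, 100]
out = [0] * len(a)

blocks = launch(vector_add_kernel, len(a), 4, a, b, out)
print(blocks, out)
```

The point of the model is that the same tiny function runs for every element at once, which is why workloads built from huge numbers of identical, independent operations, such as the matrix math behind machine learning, map onto GPUs so well.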

The development of CUDA is what really sets Nvidia apart from AMD. While AMD didn’t really have a good alternative, Nvidia invested heavily in CUDA, and in turn, most of the AI progress in recent years was made using CUDA libraries.

AMD has done some work on its own alternatives, but it’s fairly recent when you compare it to the years of experience Nvidia has had. AMD’s Radeon Open Compute platform (ROCm) lets developers accelerate compute and machine learning workloads. Under that ecosystem, it has launched a project called GPUFORT.

GPUFORT is AMD’s effort to help developers transition away from Nvidia cards and onto AMD’s own GPUs. Unfortunately for AMD, Nvidia’s CUDA libraries are much more widely supported by some of the most popular deep learning frameworks, such as TensorFlow and PyTorch.

Despite AMD’s attempts to catch up, the gap only grows wider each year as Nvidia continues to dominate the AI and ML landscape.

Time is running out

Nvidia’s investment in AI was certainly sound. It left Nvidia with a booming gaming GPU lineup alongside a powerful range of cards capable of AI- and ML-related tasks. AMD is not quite there yet.

Although AMD seems to be trying to optimize its cards on the software side with yet-unused AI cores on its latest GPUs, it doesn’t have the software ecosystem that Nvidia has built up.

AMD plays a crucial role as the only serious competitor to Nvidia, though. I can’t deny that AMD has made great strides in both the GPU and CPU markets over the past few years. It managed to climb back out of irrelevance and become a strong alternative to Intel, making some of the best processors available right now. Its graphics cards are now also competitive, even if it’s just for gaming. On a personal level, I’ve been leaning toward AMD instead of Nvidia because I’m against Nvidia’s pricing approach in the last couple of generations. Still, that doesn’t make up for AMD’s lack of AI presence.

It’s very visible in programs such as ChatGPT that AI is here to stay, but it’s also present in countless other things that go unnoticed by most PC users. In a gaming PC, AI works in the background performing tasks such as real-time optimization and anti-cheat measures. Non-gamers see plenty of AI on a daily basis too, because AI is found in ever-present chatbots, voice-based personal assistants, navigation apps, and smart home devices.

As AI permeates our daily lives more and more, and computers are needed to perform tasks that only increase in complexity, GPUs are also expected to keep up. AMD has a tough task ahead, but if it doesn’t get serious about AI, it may be doomed to never catch up.