If you're looking to buy or build a new gaming PC, then the choice of graphics card is an important one. It can be a tough one to make, too, with not only various manufacturers to pick from but also various versions of each individual graphics card. How do you upgrade your video card if you're not even sure which one you should buy?

Choosing a graphics card is all about learning to read the numbers and determining what's important. Do you need more VRAM or more graphics processing unit (GPU) cores? How important is cooling? What about power draw? These are all the questions we'll answer (and more) as we break down how to find a GPU that's right for you.

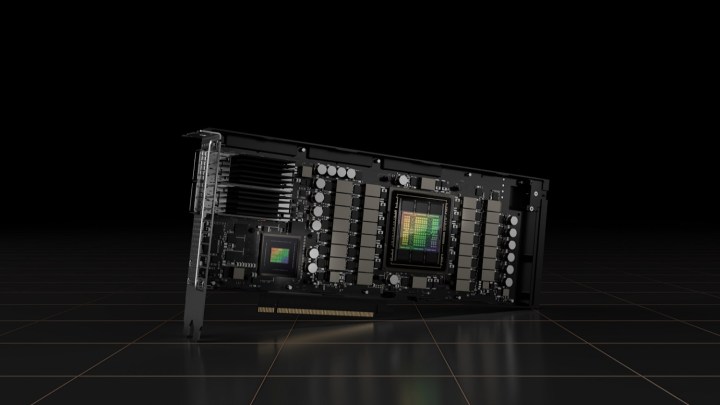

AMD versus Nvidia versus Intel

When it comes to buying a new graphics card, the two main choices are AMD and Nvidia. These two industry giants have the most powerful cards, and even their budget offerings are designed with gaming at HD resolutions in mind. Intel is mostly known for its integrated or onboard GPUs. Bundled along with its processors, those aren’t really designed for gaming in quite the same way. They can do it, but they’re best suited to independent games and older titles.

However, this may soon change, as Intel is set to launch its discrete Intel Arc graphics cards later this year. Starting with laptop solutions, Intel will eventually enter the do-it-yourself (DIY) PC market and offer desktop graphics cards for every builder to check out. If you're planning to build a PC in the later months of 2022, you'll be able to check out Intel Arc Alchemist as a viable alternative to AMD and Nvidia. If you're looking to build your rig right now, your options are still limited to those two manufacturers, but don't worry – there are plenty of GPUs to choose between.

There are more choices to make than branding when it comes to choosing a graphics card, but AMD and Nvidia do have some distinguishing features that are unique to their hardware. Nvidia cards enjoy exclusive support for G-Sync technology and tie in well with GeForce Now, but monitors that only support AMD FreeSync will still work with Nvidia. There’s also deep learning super sampling (DLSS), which has proven itself capable of delivering impressive performance improvements to a growing list of supporting games. Nvidia was the first to support ray tracing, but now, AMD also offers access to that technology. However, Nvidia has had a long head start when it comes to ray tracing, so your mileage may vary with an AMD Radeon card.

Nvidia also has the most powerful graphics cards available by quite some margin. The flagship RTX 3080 is a 4K behemoth, if you can find one in stock. The RTX 3090 is even more impressive, but it costs a lot more than some people are willing to spend. There's also the 3090 Ti, a complete beast for gaming and all manner of creative workflows.

That doesn’t mean AMD is down and out, though. Indeed, its high-end graphics cards are capable and hold an important niche in the market. Its GPUs tend to offer slightly greater value for money in most sectors of the market, though its feature set is arguably weaker. It offers support for FreeSync frame syncing (a comparable technology to G-Sync), as well as image sharpening and other visual enhancements, which can make games look better for almost no additional cost in resources. AMD also utilizes FidelityFX Super Resolution 2.0 and Radeon Super Resolution for image upscaling.

Ultimately, when it comes to picking a GPU, it is useful to consider whether your monitor supports FreeSync or G-Sync and whether any of the companion features of these companies’ graphics cards can help you. For most, price and performance will be more important considerations.

CUDA cores and stream processors

Although CPUs and graphics cards have processor “cores” at their heart, their tasks are different, so the number of them is different, too. CPUs have to be powerful, general-purpose machines, while GPUs are designed to run masses of simple calculations in parallel at any one time. That’s why CPUs have a handful of cores and GPUs have hundreds or thousands.

More is usually better, though there are other factors at play that can mitigate that. A card with slightly fewer cores might have a higher clock speed (more on that later), which can boost its performance even above that of cards with higher core counts – but not typically. That’s why individual reviews of graphics cards and head-to-head comparisons are so important.

In our test of the RTX 3080 Ti and the RTX 3080, the higher-end card was able to output over 100 frames per second (fps) in Battlefield V, but in all fairness, the RTX 3080 was not far behind at an average of 100 fps. We also compared the GPUs to AMD's high-end offering, the Radeon RX 6900 XT, and found that it performed the best of all three at 106 fps.

Called CUDA cores in the case of Nvidia’s GPUs and stream processors on AMD’s cards, GPU cores are designed a little differently depending on the GPU architecture. That makes AMD and Nvidia’s core counts not particularly comparable, at least not purely on a number basis.

Within each product line, however, you can make comparisons. The RTX 3080, for example, comes with 8,704 CUDA cores, while the RTX 3090 has 10,496. By comparison, the 2080 Ti has around 4,300 CUDA cores, half of what the 3080 has. These are two different generations of GPU, however, and just because the 3080 has double the CUDA cores, that doesn’t mean it has double the performance.

Turing CUDA cores (the ones on 20-series GPUs) can handle one integer and one floating-point calculation simultaneously per clock cycle (FP32 + INT), while Ampere CUDA cores (the ones on 30-series GPUs) can also handle two floating-point calculations per clock cycle (FP32 + FP32). So, although there is a huge theoretical performance increase, the difference in core workload doesn’t make the two generations of GPUs directly comparable.

Nvidia cards now have RT and Tensor cores, too. The RT cores are simple enough, handling hardware ray-tracing with Nvidia’s RTX-branded GPUs. Tensor cores are a little more involved. Nvidia introduced its Tensor cores with Volta, but it wasn’t until Turing — the generation of GPUs including the RTX 2080 — that consumers were able to buy into the new tech. Nvidia has continued to expand on Tensor cores with its Ampere architecture, featured in the RTX 3090 and 3080.

Tensor cores accelerate floating point and integer calculations, but they’re not built equally. First-generation cores on Volta simply handle deep learning with FP16, while second-gen cores support FP32 to FP16, as well as INT8 and INT4. With the most recent third-gen cores, featured on RTX 30-series GPUs, Nvidia introduced Tensor Float 32 (TF32), which keeps the numeric range of FP32 at reduced precision while speeding up A.I. workloads by up to 20 times.
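
To make the difference between these number formats a little more concrete, here is a minimal Python sketch of what Tensor Float 32 keeps and what it drops. It assumes the published TF32 layout (the 8 exponent bits of FP32, but only 10 of its 23 mantissa bits) and simply truncates the extra bits; real hardware rounds rather than truncates, and the helper name `to_tf32` is our own, not an Nvidia API.

```python
import struct


def to_tf32(x: float) -> float:
    """Approximate TF32 by truncating an FP32 value to a 10-bit mantissa.

    Assumption: simple truncation stands in for the hardware's rounding.
    """
    # Reinterpret the FP32 bit pattern as an unsigned 32-bit integer.
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    # Clear the lowest 13 mantissa bits, keeping sign, exponent and 10 mantissa bits.
    bits &= 0xFFFFE000
    return struct.unpack("<f", struct.pack("<I", bits))[0]


# Very large values survive, because the exponent range matches FP32.
print(to_tf32(1e30))
# Very small differences are lost, because the mantissa is shorter.
print(to_tf32(1.0 + 2**-12))
```

The point of the sketch is the trade-off: range is kept, fine precision is given up, which is a bargain many A.I. workloads tolerate well.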

For these cores, it’s not about the number of them, but rather what generation they’re from. Between RTX 20-series and 30-series GPUs, 30-series cards are better equipped here. We imagine it’ll get more complex as time goes on — Tensor cores aren’t going anywhere — so if you can afford a more recent Nvidia GPU, it’s usually best to stick with one.

VRAM

Just as every PC needs system memory, every graphics card needs its own dedicated memory, typically called video RAM (VRAM) – though that’s a somewhat outdated term that’s been repurposed for its modern, colloquial use. Most commonly, you’ll see memory listed in gigabytes of GDDR followed by a number, designating its generation. Recent GPUs range anywhere from 4GB of GDDR4 to 24GB of GDDR6X, though there are also existing graphics cards with GDDR5. Another memory type, called high bandwidth memory (HBM, HBM2, or HBM2e), offers higher performance at a greater cost and heat output.

VRAM is an important measure of a graphics card’s performance, though to a lesser extent than core counts. It affects the amount of information that the card can cache ready for processing, which makes it vital for high-resolution textures and other in-game details. If you plan to play medium settings at 1080p, then 4GB of VRAM will suffice for most games, but if you want to kick things up a notch, it will fall short.

If you want to play with higher resolution textures and at higher resolutions, 12GB of VRAM gives you a lot more headroom, and it’s far more future-proof — perfect for when next-generation console games start to make the leap to PC. Anything beyond 12GB is reserved for the most high-end of cards and is only really necessary if you’re looking to play or video edit at 4K or higher resolutions.
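
For a rough feel of why higher-resolution textures eat VRAM so quickly, the sketch below estimates the size of a single texture. It is a back-of-the-envelope calculation under our own assumptions: uncompressed 4-bytes-per-pixel color and a full mipmap chain adding roughly one third. Games normally compress textures, so real figures are lower, and `texture_mib` is a hypothetical helper, not part of any graphics API.

```python
def texture_mib(width: int, height: int, bytes_per_pixel: int = 4,
                mipmaps: bool = True) -> float:
    """Estimate the memory footprint of one uncompressed texture in MiB."""
    size_bytes = width * height * bytes_per_pixel
    if mipmaps:
        # A full mip chain adds about one third on top of the base level.
        size_bytes = size_bytes * 4 / 3
    return size_bytes / 2**20


for side in (1024, 2048, 4096):
    print(f"{side}x{side}: {texture_mib(side, side):.1f} MiB")
```

Doubling the texture resolution quadruples its footprint, which is why a step up in texture quality can push a 4GB card past its limit.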

GPU and memory clock speed

The other piece in the GPU performance puzzle is clock speed of both the cores and memory. This is how many complete calculation cycles the card can make every second, and it’s where any gap in core or memory count can be closed, in some cases significantly. It’s also where those looking to overclock their graphics card have the biggest impact.

Clock speed is typically listed in two measures: Base clock and boost clock. The former is the lowest clock speed the card should run at, while the boost clock is what it will try to run at when it’s heavily taxed. However, thermal and power demands may not allow it to reach that clock often or for extended periods. For this reason, AMD cards also specify a game clock, which is more representative of the typical clock speed you can expect to reach while gaming.

A good example of how clock speed can make a difference is with the RTX 2080 Super and 2080 Ti. Although the 2080 Ti has almost 50% more cores than the 2080 Super, it’s only 10% to 30% faster, depending on the game. That’s mostly due to the 300MHz+ higher clock speed most 2080 Supers have over the 2080 Ti.
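
You can sanity-check that relationship with a quick calculation of theoretical peak throughput, which is roughly cores times clock speed times two operations per cycle. The sketch below is only an estimate: the core counts and reference boost clocks are our assumptions taken from public spec sheets (partner cards often clock higher), and peak numbers never translate directly into frame rates.

```python
def peak_fp32_tflops(cores: int, boost_mhz: float) -> float:
    """Theoretical peak FP32 throughput in TFLOPS.

    Assumes each core retires one fused multiply-add (2 operations) per clock.
    """
    return cores * 2 * boost_mhz * 1e6 / 1e12


# Assumed reference specifications, not measured values.
rtx_2080_ti = peak_fp32_tflops(cores=4352, boost_mhz=1545)
rtx_2080_super = peak_fp32_tflops(cores=3072, boost_mhz=1815)

print(f"2080 Ti:    {rtx_2080_ti:.2f} TFLOPS")
print(f"2080 Super: {rtx_2080_super:.2f} TFLOPS")
print(f"Core advantage:        {4352 / 3072 - 1:.0%}")
print(f"Theoretical advantage: {rtx_2080_ti / rtx_2080_super - 1:.0%}")
```

With these assumed figures, the higher clock of the 2080 Super cuts the Ti's on-paper lead to about half of what the core counts alone would suggest.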

Faster memory helps, too. Memory performance is all about bandwidth, which is calculated by multiplying the effective speed of the memory by the width of its bus. The faster GDDR6X of the RTX 3080 helps improve its overall bandwidth beyond the RTX 2080 and RTX 2080 Ti by around 20%. There is a ceiling of usefulness, however, with cards like the AMD Radeon VII offering enormous bandwidth but lower gaming performance than a card like the 3080.
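
Memory bandwidth is commonly worked out as the per-pin data rate multiplied by the bus width, divided by eight to convert bits to bytes. Here is a small sketch of that arithmetic. The data rates and bus widths are our assumptions from public spec sheets, so treat the output as illustrative rather than measured.

```python
def memory_bandwidth_gbs(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s from per-pin data rate and bus width."""
    return data_rate_gbps * bus_width_bits / 8


# Assumed reference specifications: (data rate in Gbps per pin, bus width in bits).
cards = {
    "RTX 3080 (GDDR6X)": (19, 320),
    "RTX 2080 Ti (GDDR6)": (14, 352),
    "RTX 2080 (GDDR6)": (14, 256),
}

for name, (rate, width) in cards.items():
    print(f"{name}: {memory_bandwidth_gbs(rate, width):.0f} GB/s")
```

Note that a narrower bus with faster memory can still come out ahead, which is the case for the RTX 3080 against the RTX 2080 Ti under these figures.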

When it comes to buying a graphics card, clock speeds should mostly be considered after you have picked a model. Some GPU models feature factory overclocks that can raise performance by a few percentage points over the competition. With good cooling to sustain those clocks, the gain can be significant.

Cooling and power

A card is only as powerful as its cooling and power draw allow. If you don’t keep a card within safe operating temperatures, it will throttle its clock speed, and that can mean significantly worse performance. It can also lead to higher noise levels as the fans spin faster to try to cool it. Although coolers vary massively from card to card and manufacturer to manufacturer, a good rule of thumb is that those with larger heatsinks and more and larger fans tend to be better cooled. That means they run quieter and often faster.

That can also open up room for overclocking if that interests you. Aftermarket cooling solutions, like bigger heatsinks and water cooling in extreme cases, can make cards run even quieter and cooler. Note that it is much more complicated to change a cooler on a GPU than it is on a CPU.

If you play in headphones, low noise cooling may not be as much of a concern, but it’s still something worth considering when building or buying your PC.

As for power, focus on whether your PSU has enough wattage to support your new card. RealHardTechX has a great chart to find that out. You also need to make sure that your PSU has the right cables for the card you’re planning to buy. There are adapters that can do the job, but they aren’t as stable, and if you need to use one, it’s a good sign your PSU is not up to the task.
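
If you'd rather estimate it yourself, a simple rule of thumb is to add up the power draw of your main components and leave some headroom. The sketch below does just that. Everything in it is an assumption for illustration: the 100W allowance for the rest of the system, the 30% headroom, and the example wattages are ours, so check your card maker's recommended PSU rating before you buy.

```python
import math


def recommended_psu_watts(gpu_watts: float, cpu_watts: float,
                          other_watts: float = 100.0,
                          headroom: float = 1.3) -> int:
    """Rough PSU size: total estimated load plus headroom, rounded up to 50W."""
    load = gpu_watts + cpu_watts + other_watts
    return math.ceil(load * headroom / 50) * 50


def psu_is_enough(psu_watts: float, gpu_watts: float, cpu_watts: float) -> bool:
    """True if the PSU meets or exceeds the rough recommendation."""
    return psu_watts >= recommended_psu_watts(gpu_watts, cpu_watts)


# Hypothetical build: a 320W graphics card paired with a 125W CPU.
print(recommended_psu_watts(320, 125))
print(psu_is_enough(650, 320, 125))
```

The headroom is there because power draw can spike briefly above a card's rated figure, and PSUs tend to run quieter when they aren't near their limit.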

If you need a new PSU, these are our favorites.

With everything else considered, budget could be the most important factor. How much should you actually spend on a GPU? This is different for everyone, dependent on how you plan to use it and what your budget is.

Unfortunately, the past couple of years have not been a great time to shop for a graphics card. Due to the ongoing GPU shortage, the best graphics cards on the market are vastly overpriced. As such, the GPU will likely be the most expensive component in your PC by a large margin. That said, here are some generalizations:

- For entry-level, independent gaming and older games, onboard graphics may suffice. Otherwise, anywhere up to $200 on a dedicated graphics card will give you slightly better frame rates and detail settings.

- For solid 60-plus fps 1080p gaming in esports games and older AAA games, expect to spend around $300 to $500.

- For modern AAA games at 1080p or 1440p everywhere else, you’ll likely need to spend closer to $500 to $800.

- 60-plus fps at 1440p in any game or entry-level ray tracing in supporting games will cost you $800 to $1,200.

- 4K gaming, or the most extreme of gaming systems, can cost as much as you’re willing to spend, but somewhere between $1,500 and $2,500 is likely.
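
The tiers above can be turned into a quick lookup, sketched below. It is a simplification: the price brackets in the list leave small gaps (for example, between $200 and $300), and we've assumed that a budget falling in a gap belongs to the next tier up. The function name and tier labels are our own shorthand.

```python
from bisect import bisect_left

# Upper bound in USD of each tier except the last, which is open-ended.
UPPER_BOUNDS = [200, 500, 800, 1200]
TIERS = [
    "Entry-level: indie and older games",
    "60-plus fps at 1080p in esports and older AAA games",
    "Modern AAA games at 1080p or 1440p",
    "60-plus fps at 1440p or entry-level ray tracing",
    "4K gaming and extreme systems",
]


def tier_for_budget(budget_usd: float) -> str:
    """Return the rough performance tier a given GPU budget falls into."""
    return TIERS[bisect_left(UPPER_BOUNDS, budget_usd)]


for budget in (150, 450, 700, 1000, 2000):
    print(f"${budget}: {tier_for_budget(budget)}")
```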

Both Intel and AMD make CPUs that include graphics cores on the same chip, typically referred to as integrated graphics processors (IGP) or onboard graphics. They are far weaker than dedicated graphics cards and typically only provide base-level performance for low-resolution, low-detail gaming. However, there are some that are better than others.

Many current-generation Intel CPUs include UHD 700-series graphics, which make certain low-end games just about playable at low settings. Previous-gen CPUs were equipped with Intel UHD 600-series, which we have tested extensively. In our testing, we found the UHD 620 able to play games like World of Warcraft and Battlefield 4 at low settings at 768p resolution, but it didn’t break 60 fps, and 1080p performance was significantly lower — barely playable.

Intel’s 11th-generation (Gen11) graphics, found on its 10th-generation Ice Lake processors, are much more capable. CPUs equipped with that technology are able to play games like CS:GO at lower settings at 1080p. Anandtech’s testing found that a 64 execution unit GPU onboard the Core i7-1065G7 in a Dell XPS 13 managed over 43 fps in Dota 2 at enthusiast settings at 1080p resolution. We found it a viable chip for playing Fortnite at 720p and 1080p, too.

Intel’s 11th-generation Tiger Lake processors are even more capable. Although a far cry from a dedicated GPU, our Tiger Lake test machine was able to hit 51 fps in Battlefield V and 45 fps in Civilization VI at 1080p with medium settings. The fact that we were able to even dream of 60 fps in Battlefield V on integrated graphics was astounding.

The more recent Ryzen 5000G processors have onboard graphics, too, and they’re impressive. According to some benchmarks, the Ryzen 7 5700G is equipped with one of the fastest integrated GPUs ever and is perfectly sufficient for a less-than-demanding gamer.

As passable as these gaming experiences are, though, you’ll find a much richer, smoother experience with higher detail support and higher frame rates on a dedicated graphics card.