The best laptops and laptop brands are all-in on AI. Even compared to a year ago, the list of the best laptops has been infested with NPUs and a new era of processors, all of which promise to integrate AI into every aspect of our day-to-day lives. Even a couple of years into this AI revolution, though, there isn’t much to show for it.

We now have Qualcomm’s long-awaited Snapdragon X Elite chips in Copilot+ laptops, and AMD has thrown its hat in the ring with Ryzen AI 300 chips. Eventually, we’ll also have Intel Lunar Lake CPUs. The more we see of these processors, however, the clearer it becomes that they’re built for an AI future rather than for the needs of today, and you often can’t have it both ways.

It comes down to space

One important aspect of chip design that doesn’t get talked about enough is space. If you browse hardware forums and enthusiast-focused websites, you’ll already know how important space is.

But for everyone else, it’s not something you normally think about. Companies like AMD and Intel could make massive chips with a ton of computing horsepower (and a ton of power and thermal demands, but that’s a different conversation), but they don’t. A lot of the art of chip design comes down to how much performance you can cram into a given space.

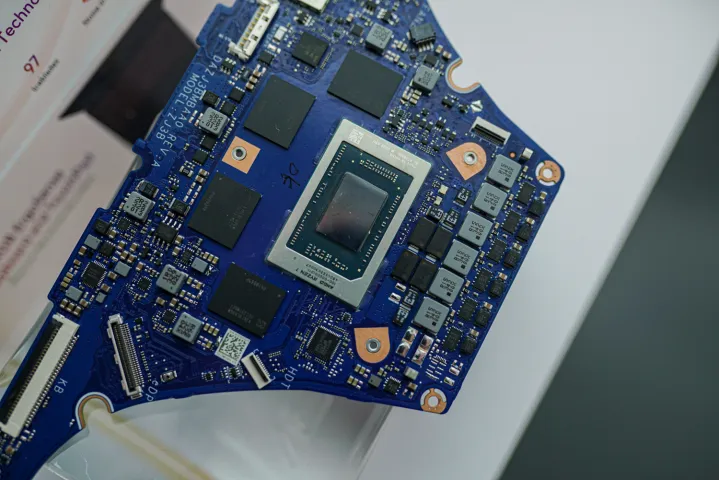

This matters because adding hardware onto a chip isn’t free: it means taking space away from something else. For instance, you can see an annotated die shot of a Ryzen AI 300 CPU below. In the upper right, you can see how much space the XDNA NPU takes up. It’s the smallest of the three processors on the chip (alongside the CPU and GPU), with speculation pinning it at around 14mm², but it still claims a meaningful share of the die. AMD could use that space for more cores or, more likely, additional L3 Infinity Cache for the GPU.

Annotated! 😁

Funny how the RDNA WGP scalar units keep getting moved around, now back to RDNA1 layout.

The SRAM around the IMC is also present on PHX2 (and presumably PHX1), but seemingly not the desktop IOD or older CPUs/APUs.

Overall… neat, without any major surprises. https://t.co/cf6MZVMgT2 pic.twitter.com/MfPqQRDGcY

— Nemez (@GPUsAreMagic) July 29, 2024

This isn’t to single out AMD or to say that Ryzen AI 300 CPUs perform poorly. They don’t, as you can read in our Asus Zenbook S 16 review. AMD, Intel, and Qualcomm are constantly making trade-offs in design to fit everything they need onto the chip, and it’s not as simple as just throwing more cache on and calling it a day. Pulling one lever moves countless other values, and all of those need to be brought into balance.

But it is a good demonstration that designers can’t just add an NPU to a chip without making compromises elsewhere. At the moment, these NPUs are also largely useless. Even AI-accelerated apps would rather tap the horsepower of the integrated GPU, and if you have a discrete GPU, it’s leagues faster than the NPU. There are some use cases for an NPU, but for the vast majority of people, it really just provides (slightly) better background blur.

Ryzen AI 300 is the only example we have now, but Intel’s upcoming Lunar Lake chips will be caught in a similar situation. Both AMD and Intel are gunning for Microsoft’s stamp of approval in Copilot+ PCs, so they’re including NPUs that clear a certain performance bar (40 TOPS) to satisfy Microsoft’s seemingly arbitrary requirements. AMD and Intel were already including AI co-processors on their chips before Copilot+, but those co-processors are basically useless now that the requirements are much higher.

It’s impossible to say whether AMD and Intel would have designed their processors differently without the Copilot+ push. For now, however, we have a piece of silicon that doesn’t serve much of a purpose on Ryzen AI 300, and eventually on Lunar Lake. It recalls Intel’s AI push with Meteor Lake, whose NPUs have become all but obsolete in the face of Copilot+ requirements.

Promised AI features

As AMD and Intel have both promised, they’ll eventually be brought into the Copilot+ fold. At the moment, only Qualcomm’s Snapdragon X Elite chips have Microsoft’s stamp of approval, but AMD, at least, says that its chips will be able to access Copilot+ features before the end of the year. That’s the other problem, though: we don’t really have any Copilot+ features.

The star of the show since Microsoft announced Copilot+ has been Recall, and no one outside the press has been able to use it. Microsoft delayed it, restricted it to Windows Insiders, and, by the time Copilot+ PCs were ready to launch, pushed it back indefinitely. AMD and Intel might be brought into the Copilot+ fold before the end of the year, but that won’t mean much without more local AI features.

We’re seeing the consequences of Microsoft’s influence on the PC industry in action. We have a slate of new chips from Qualcomm and AMD, and soon Intel, all of which include a piece of silicon that isn’t doing a whole lot. It feels rushed, similar to what we saw with Bing Chat, and I wonder if Microsoft is really as committed to this platform as it says. That’s to say nothing of the fact that what’s driving sales of Copilot+ PCs isn’t AI features but better battery life.

In the next few years, it’s estimated that a half-billion AI-capable laptops will be sold, and that by 2027 they’ll make up more than half of all PC shipments. It’s clear why Microsoft and the wider PC industry are pushing into AI so hard. But when it comes to the products we have today, it’s hard to say they’re as essential as Microsoft, Intel, AMD, and Qualcomm would have you believe.

Laying the groundwork

It’s still worth looking at why this is happening, however. We have a classic chicken-and-egg problem with AI PCs, and even with the introduction of Copilot+ and the delay of Recall, that hasn’t changed. Intel, AMD, and Qualcomm are trying to lay the groundwork for future AI applications that will hopefully be so seamless with how we use PCs that we don’t even think about having an NPU. It’s not a crazy idea. Apple has been doing this exact thing for years, and Apple Intelligence feels like the natural progression of that.

That’s not where we are right now, though, so if you’re investing in an AI PC, you have to accept the consequences of being an early adopter. There aren’t many apps that can leverage your NPU unless you really go searching, and even apps with local AI features would prefer to run on your GPU. That’s not to mention the goalpost moving we’ve already seen with Copilot+ and the initial wave of NPUs from AMD and Intel.

I have no doubt that we’ll get there. There’s simply too much money being thrown at AI right now for it not to become a mainstay in PCs. I’m still waiting for it to become as essential as we’ve been led to believe, though.